Collaboration between Zyphra, AMD, and IBM delivers ZAYA1, the first large-scale Mixture-of-Experts foundation model trained entirely on an AMD platform using AMD Instinct MI300X GPUs, AMD Pollara networking, and ROCm software.

SAN FRANCISCO, Nov. 24, 2025 /PRNewswire/ -- Zyphra today announced a major milestone in its AI infrastructure and model development with the release of a technical report demonstrating large-scale training on AMD GPUs and networking.

The paper introduces ZAYA1, the first large-scale Mixture-of-Experts (MoE) foundation model trained entirely on an integrated AMD platform (AMD Instinct™ GPUs, AMD Pensando™ networking interconnect, and ROCm software stack), and presents that platform as a viable, high-performance, production-ready alternative for frontier-scale AI training.

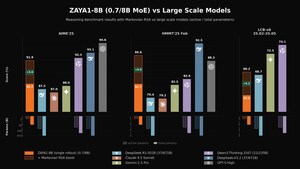

Despite operating at a fraction of the active parameter count, ZAYA1-base (8.3B total parameters, 760M active) achieves performance comparable to leading models such as Qwen3-4B (Alibaba) and Gemma3-12B (Google), and outperforms models including Llama-3-8B (Meta) and OLMoE across reasoning, mathematics, and coding benchmarks.

"Efficiency has always been a core guiding principle at Zyphra. It shapes how we design model architectures, develop algorithms for training and inference, and choose the hardware with the best price-performance to deliver frontier intelligence to our customers," said Krithik Puthalath, CEO of Zyphra. "ZAYA1 reflects this philosophy and we are thrilled to be the first company to demonstrate large-scale training on an AMD platform. Our results highlight the power of co-designing model architectures with silicon and systems, and we're excited to deepen our collaboration with AMD and IBM as we build the next generation of advanced multimodal foundation models."

Mixture-of-Experts (MoE) models have become the foundational architecture for modern frontier AI systems, using specialized expert networks that activate dynamically to deliver greater efficiency, scalability, and reasoning performance than traditional dense architectures. This paradigm shift defines today's leading frontier models, including GPT-5, Claude-4.5, DeepSeek-V3, and Kimi2, all of which leverage MoE designs to expand capability while optimizing compute utilization. ZAYA1 represents the first large-scale pretraining of an MoE model on an AMD platform, demonstrating that the AMD AI ecosystem is ready to power frontier-class AI development end-to-end.
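For readers new to the idea, the dynamic expert activation described above can be illustrated with a minimal sketch of generic top-k routing. This is not ZAYA1's router, which the technical report describes separately; the function names (`route_top_k`, `moe_forward`), the toy experts, and the router scores are all hypothetical and chosen only for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts (hypothetical generic router).

    Returns (expert_index, weight) pairs; weights are renormalized
    over the selected experts so they sum to 1.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the selected experts' outputs.

    Experts that are not selected are never called, which is why
    only a fraction of the total parameters is active per token.
    """
    out = [0.0] * len(x)
    for idx, weight in route_top_k(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

def make_expert(scale):
    """Toy stand-in for an expert network: scales its input."""
    return lambda x: [scale * xi for xi in x]

# Four toy experts; in a real model the router scores are learned per token.
experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
x = [1.0, -1.0]
router_logits = [0.1, 2.0, -1.0, 2.0]  # assumed scores for one token

selected = route_top_k(router_logits, k=2)
y = moe_forward(x, experts, router_logits, k=2)
```

In this toy setup only two of the four experts run for the token, mirroring in miniature how an MoE model can hold many more total parameters than it activates for any single input.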

Zyphra co-designed ZAYA1 around AMD silicon, introducing innovations such as an advanced routing architecture, Compressed Convolutional Attention (CCA), and lightweight residual scaling to achieve higher training throughput and more efficient inference through improved expert utilization.

"Zyphra's work with AMD and IBM demonstrates how an open platform built on AMD Instinct GPUs and AMD Pensando networking can deliver breakthrough performance and efficiency for large-scale AI," said Philip Guido, EVP and Chief Commercial Officer, AMD. "This milestone underscores how innovative AMD hardware and software solutions are enabling the next wave of frontier AI development with industry leaders."

Building on prior collaborative work, Zyphra worked closely with AMD and IBM to design and deploy a large-scale training cluster powered by AMD Instinct GPUs with an AMD Pensando networking (Ethernet) interconnect. The jointly engineered AMD and IBM cluster, announced earlier this quarter, combines AMD Instinct MI300X GPUs with IBM Cloud's high-performance fabric and storage architecture, providing the foundation for ZAYA1's large-scale pretraining.

"As AI creates opportunities for enterprises to innovate, foundation models are key to unlocking accelerated development, efficiency and productivity," said Alan Peacock, GM of IBM Cloud. "We are proud to deliver IBM's scalable AI infrastructure as the foundation for ZAYA1's large-scale model and are excited to continue collaborating with AMD on AI model development across our mutual clients."

The collaboration demonstrates how Zyphra's advanced AI research and optimized software stack, combined with the AMD platform powered by IBM's infrastructure through IBM Cloud, can deliver the performance needed for reliable frontier-scale AI model development.

For more information, please see the technical report on arXiv, the Zyphra technical blog post, and the AMD blog post.

About Zyphra

Zyphra is a full-stack, open-source superintelligence company based in San Francisco on a mission to build human-aligned AGI.

Zyphra's core research thesis toward general superintelligence is focused on developing next-generation multimodal architectures for long-context reasoning, long-term memory, and continual learning.

The company is building two products:

Zyphra Inference Cloud - an API inference platform for Zyphra's multimodal models (language, audio and vision) and other leading open-source models.

Maia - an intelligent assistant for teams that enhances collaboration by bringing search, communication, and productivity tools together in one platform.

Zyphra's team is committed to democratizing advanced AI systems, exploring novel architectures at the frontier of silicon performance, and advancing the scientific understanding of powerful models through open source and open science. Visit www.zyphra.com for more information.

Media Contact:

Paul White

VP Business Development

[email protected]

www.zyphra.com

SOURCE Zyphra
