BOSTON, Oct. 19, 2021 /PRNewswire/ -- It is widely accepted that around 95% of road traffic accidents are caused by human drivers. We are fallible, easily distracted, and potentially dangerous. The risk we pose behind the wheel is not just a problem for other human drivers; it can also be difficult for autonomous vehicles to contend with.

Every company testing autonomous vehicles in California must report any collision to the California DMV. The new IDTechEx report "Autonomous Cars, Robotaxis & Sensors 2022–2042" analyzes this data, covering the last two and a half years of reports. It is not looking good for human drivers.

Of 187 reports, only 2 incidents could be attributed to poor performance of the autonomous system. That means a staggering 99% of the reported crashes involving autonomous vehicles were caused by human error. It is also worth noting that of the 83 incidents recorded while the vehicles were operating in autonomous mode, 81 were caused by a human, either in another vehicle or as a misbehaving pedestrian.
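As a quick check, the percentages can be recomputed from the counts given in the release. This minimal sketch assumes, as the release does, that every incident not attributed to the autonomous system counts as human-caused; the function name `human_caused_share` is purely illustrative.

```python
def human_caused_share(total: int, av_at_fault: int) -> float:
    """Percentage of incidents NOT attributed to the autonomous system.

    Assumption (from the release): every such incident is counted as human-caused.
    """
    return 100 * (total - av_at_fault) / total


# Counts as quoted in the release from the California DMV collision reports.
all_reports = human_caused_share(187, 2)     # all 187 reports
autonomous_mode = human_caused_share(83, 2)  # the 83 incidents in autonomous mode

print(f"All reports: {all_reports:.1f}%")               # 98.9%
print(f"Autonomous mode only: {autonomous_mode:.1f}%")  # 97.6%
```

The 185 of 187 figure works out to about 98.9%, which rounds to the 99% quoted; 81 of 83 is about 97.6%.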

The results, shown in the graphic, only tell half the story, though. When delving into individual cases, the ineptitude of some drivers becomes glaringly obvious. Here are four example cases where it is very unlikely that two autonomous vehicles would have had the same collision. These cases are summaries of real incidents documented and available on the California DMV website.

Case 1 (Pony.ai, 12th July 2019)

At a traffic light-controlled junction, the test driver of the autonomous vehicle notices that the car in front has its reverse lights on. As a precaution, the test driver backs up 20-30 feet. When the light turns green, the car in front accelerates in reverse and stops 4 feet short of the Pony.ai vehicle. Without shifting out of reverse, the driver in front accelerates again and hits the Pony.ai vehicle.

Case 2 (Cruise, 27th March 2021)

Here, a Cruise vehicle operating in autonomous mode was making a left turn at a 4-way junction when a vehicle behind it attempted to overtake and continue straight on. That vehicle collided with the front left of the autonomous Cruise vehicle.

Case 3 (Cruise, 25th February 2021)

The Cruise vehicle, operating in autonomous mode, was making a right turn at a junction and had the right of way. A vehicle approaching from the left failed to stop at a stop sign and collided with the autonomous car.

Case 4 (Zoox, 22nd November 2019)

The autonomous vehicle was being driven by the human test driver in manual mode. The test driver entered a junction under a green light and was hit by another vehicle illegally entering the junction while fleeing from the local police force.

The cases above are among the more unusual ones and have been shared to demonstrate how poor human driving can sometimes be. In the vast majority of the 81 cases where a human caused a collision with an autonomously driven vehicle, the circumstances were far less interesting. The most common form of collision was simply being rear-ended while in traffic or stopped. These kinds of crashes are likely caused by human inattention or distraction.

The point remains, though, that in the cases given and in most others, a collision would have been highly unlikely if both vehicles had been autonomous. Autonomous drivers don't mistake forward and reverse gears, they don't take unnecessary risks such as overtaking at junctions or running stop signs, they pay full attention at all times, and, finally, they won't flee from the police.

What about the two cases where the autonomous vehicle was at fault? Both involved a Zoox vehicle, and in both, the Zoox autonomous system appeared to misjudge its clearance from parked vehicles it was attempting to navigate around. The Zoox vehicles made contact, causing minor damage. This was likely due either to a weakness or blind spot in the sensor suite or to a fault in the autonomous driver. Either way, as R&D is still underway, autonomous vehicles are not yet infallible, and likely never will be. More information about the current maturity of robotaxi players can be found in IDTechEx's latest report, "Autonomous Cars, Robotaxis & Sensors 2022–2042".

Part of the problem could be assumed behavior: the human driver expects the autonomous vehicle to go and starts moving in anticipation, only to hit the still-stationary autonomous vehicle. It is like being caught out by a learner driver who does not pull away at a roundabout or other junction. There appears to be a sufficient gap, so the driver behind begins to move, expecting the learner to have gone for it, but the overly cautious learner is still there.

To further cement this point, while studying disengagement reports from robotaxi leader Waymo, IDTechEx found that approximately 20% of disengagements were caused by the test driver reacting to a nearby human driver's poor behavior. Examples include failing to abide by the rules and etiquette of the road, or behaving aggressively towards the autonomous vehicle. Similar incidents were recorded in the collision reports; for example, two pedestrians attacked two Cruise vehicles with wooden sticks in a completely unprovoked display of aggression.

Autonomous and connected vehicles have many advantages over human drivers. They have permanent 360° perception, they can communicate their intentions to each other in advance, and they do not make silly operational errors (such as accidentally engaging reverse). As they mature, it will be hard to deny that they are superior to human drivers, and we will need to take a back seat when it comes to driving.

IDTechEx Mobility Research

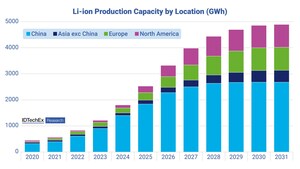

IDTechEx is actively researching Mobility-as-a-Service (MaaS) and autonomy and has just released an updated report, "Autonomous Cars, Robotaxis & Sensors 2022–2042".

This research forms part of the broader mobility research portfolio from IDTechEx, who track the adoption of autonomy, electric vehicles, battery trends, and demand across land, sea and air, helping to navigate whatever may be ahead. Find out more at www.IDTechEx.com/Research/EV.

About IDTechEx

IDTechEx guides your strategic business decisions through its Research, Subscription and Consultancy products, helping you profit from emerging technologies. For more information, contact [email protected] or visit www.IDTechEx.com.

Images download: https://www.dropbox.com/sh/5pd9xp7lalq8dul/AACmPvV1XKMEdMvB6irAYAOia?dl=0

Media Contact:

Natalie Moreton

Digital Marketing Manager

[email protected]

+44(0)1223 812300

Social Media Links:

Twitter: https://www.twitter.com/IDTechEx

LinkedIn: https://www.linkedin.com/company/idtechex/

Facebook: https://www.facebook.com/IDTechExResearch

SOURCE IDTechEx
